At Aiven, we’ve run a variety of PostgreSQL cloud performance benchmarks since 2016. In this update, we’ve compared write performance metrics for PostgreSQL 13.4 on Amazon Web Services (AWS), Google Cloud Platform (GCP) and Microsoft Azure across four benchmarks.

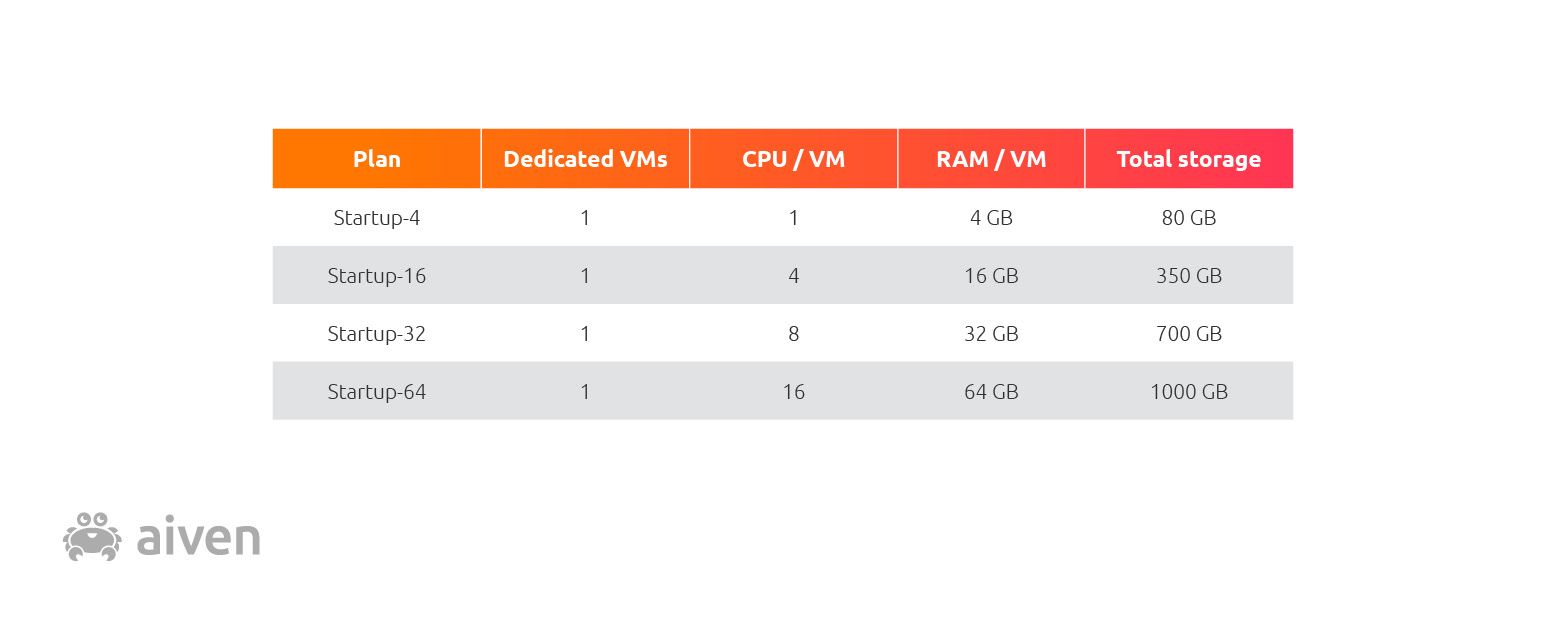

Our four benchmarks measured and compared the performance of PostgreSQL 13.4 running on AWS, GCP and Azure in each of four Aiven managed PostgreSQL plan configurations: Startup-4, Startup-16, Startup-32 and Startup-64.

Method

To examine performance, we measured the number of database transactions per second while vertically scaling up the nodes. This way the number of nodes remained the same, but the number of CPUs and the amount of RAM and storage on each node increased.

We generated load using pgbench, which we ran on another VM in the same cloud and region, using one load generator command per plan.

The variable for the startup plan being tested was the --client switch. The values for the four startup plans were, descriptively enough we hope, 4, 16, 32, and 64.
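As an illustration of how the --client value might be varied per plan, here is a minimal Python sketch that assembles one pgbench command line per plan. It is not the original load generator command: the `--time` value of 3600 seconds and the helper name `build_load_command` are assumptions made for this example, and connection parameters are omitted.

```python
import shlex

# Client counts per plan, as listed in the text above.
CLIENTS_PER_PLAN = {
    "startup-4": 4,
    "startup-16": 16,
    "startup-32": 32,
    "startup-64": 64,
}


def build_load_command(plan: str, duration_s: int = 3600) -> list[str]:
    """Return a pgbench argument list for the given plan (hypothetical helper).

    duration_s is an assumed run length; the article does not state one.
    """
    clients = CLIENTS_PER_PLAN[plan]
    return [
        "pgbench",
        f"--client={clients}",   # number of concurrent client sessions
        f"--time={duration_s}",  # fixed run duration in seconds (assumption)
    ]


if __name__ == "__main__":
    for plan in CLIENTS_PER_PLAN:
        print(plan, "->", shlex.join(build_load_command(plan)))
```

Returning an argument list rather than a single string keeps the command safe to pass to `subprocess.run` without shell quoting concerns.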

The load generator instance types used were as follows:

- AWS: m4.large

- GCP: n2d-standard-2

- Azure: standard_DS2_v2

As in a production PostgreSQL (PG) setup, the local SSDs running PG used LUKS full-disk encryption, and WAL archiving was enabled.

The PG instances under test were initialized with a --scale value adjusted to each plan, so that the DB size was roughly double the size of RAM. The values used were 500, 1500, 2500, and 4500.
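To give a feel for what these scale factors mean, the sketch below uses the row counts documented for pgbench (each scale unit adds 100,000 rows to pgbench_accounts, 10 to pgbench_tellers and 1 to pgbench_branches) and assembles an initialization command. Pairing the scale values with plans in ascending order is an assumption, as are the helper names `table_rows` and `build_init_command`. Connection parameters are omitted, and this is not the original initialization command.

```python
# Scale factors from the text; pairing them with plans in ascending
# order is an assumption, not something the article states explicitly.
SCALE_PER_PLAN = {
    "startup-4": 500,
    "startup-16": 1500,
    "startup-32": 2500,
    "startup-64": 4500,
}

# Rows created per scale unit, per the pgbench documentation.
ROWS_PER_SCALE_UNIT = {
    "pgbench_branches": 1,
    "pgbench_tellers": 10,
    "pgbench_accounts": 100_000,
}


def table_rows(scale: int) -> dict[str, int]:
    """Row count of each pgbench table for a given scale factor."""
    return {table: n * scale for table, n in ROWS_PER_SCALE_UNIT.items()}


def build_init_command(scale: int) -> list[str]:
    """pgbench initialization arguments (hypothetical helper)."""
    return ["pgbench", "--initialize", f"--scale={scale}"]


if __name__ == "__main__":
    for plan, scale in SCALE_PER_PLAN.items():
        accounts = table_rows(scale)["pgbench_accounts"]
        command = " ".join(build_init_command(scale))
        print(f"{plan}: {command} ({accounts:,} account rows)")
```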

To minimize the effects of variable latency on our benchmark, we used a comparable central Europe region for each provider:

- AWS: eu-central-1

- GCP: europe-west3

- Azure: germany-westcentral (* westeurope; see results below)

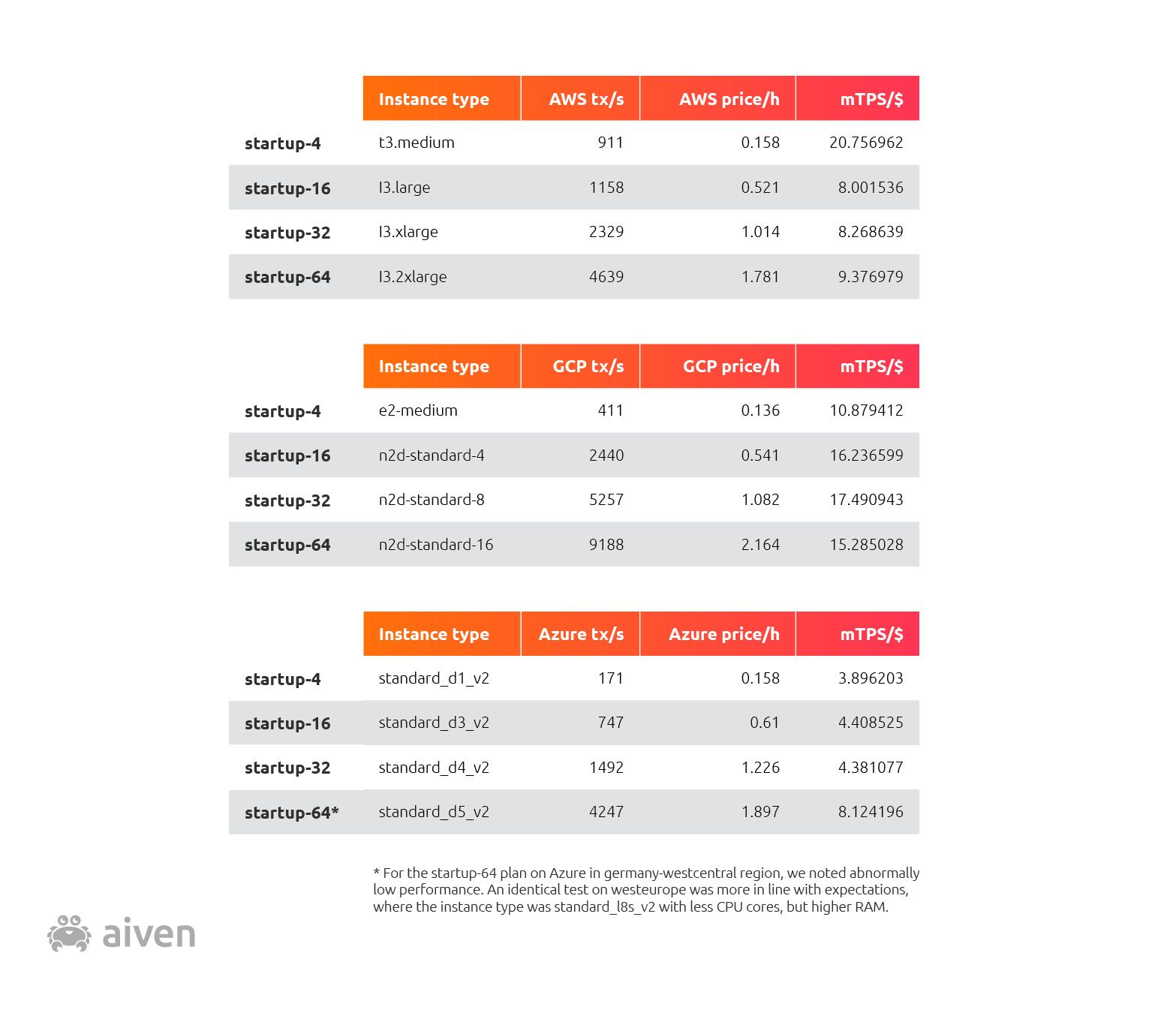

Finally, we concluded the benchmark by calculating the average mTPS/$ per PG plan according to publicly available pricing information.

Transactions per second

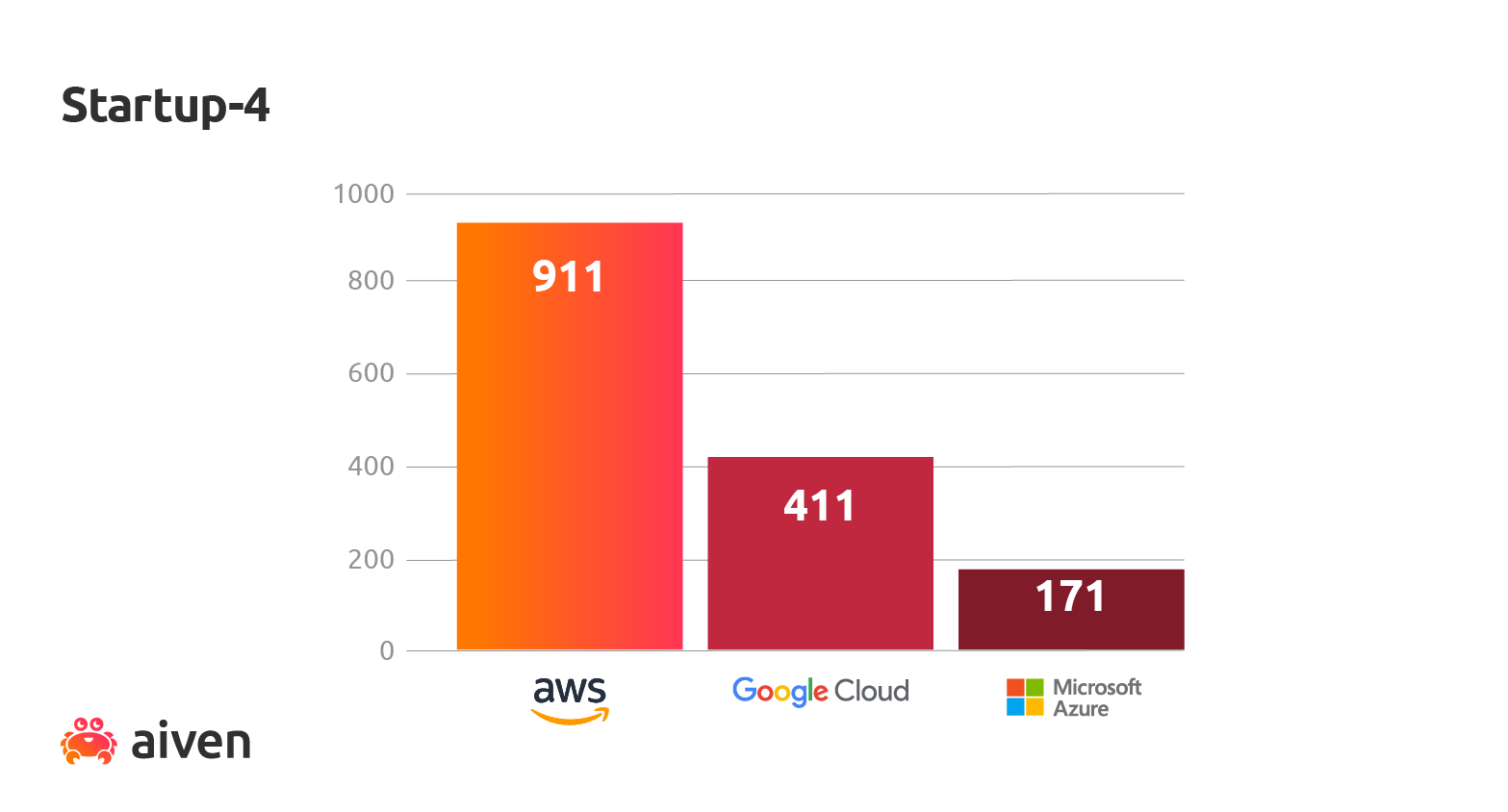

Aiven Startup-4 plans

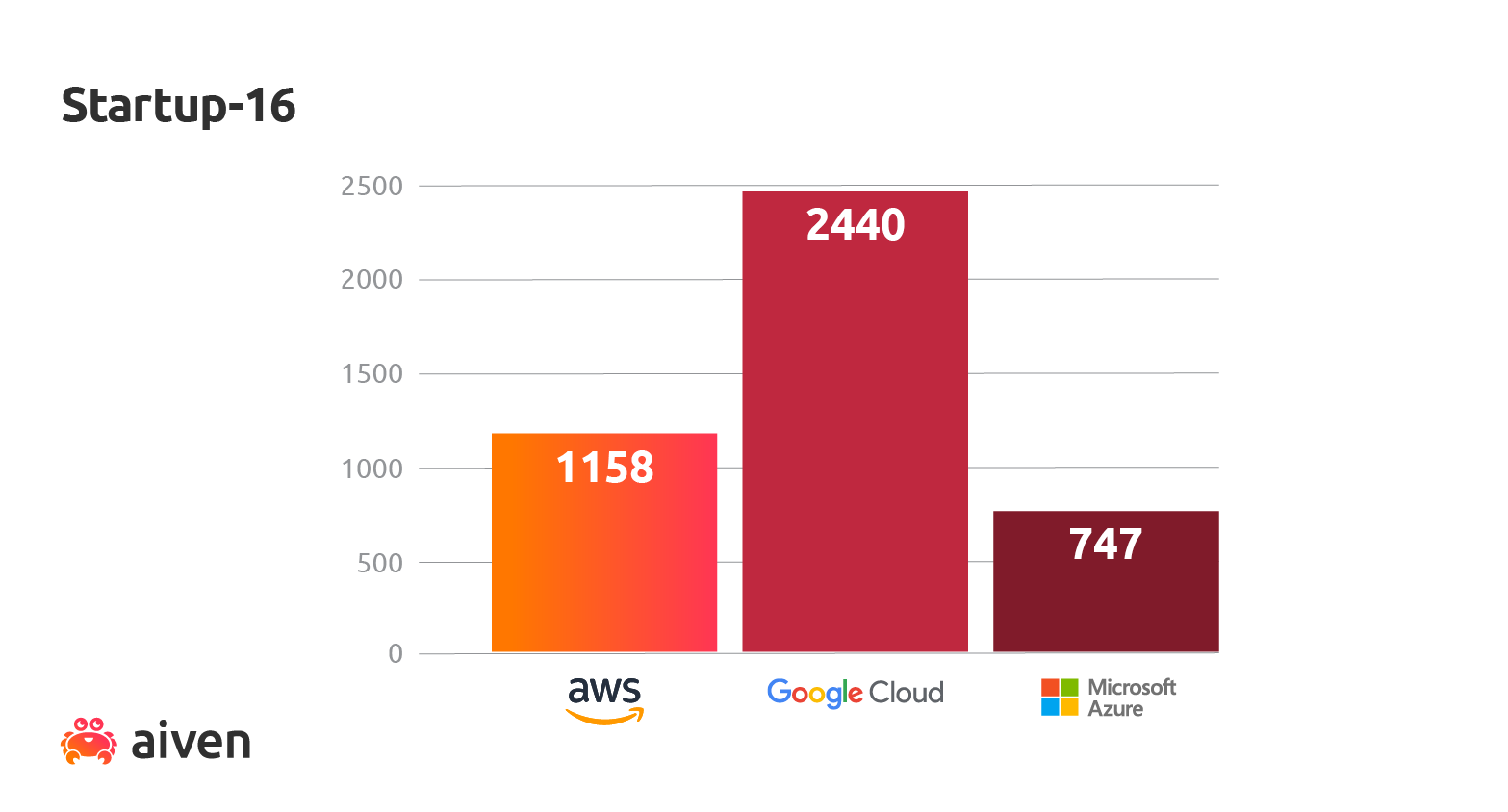

Aiven Startup-16 plans

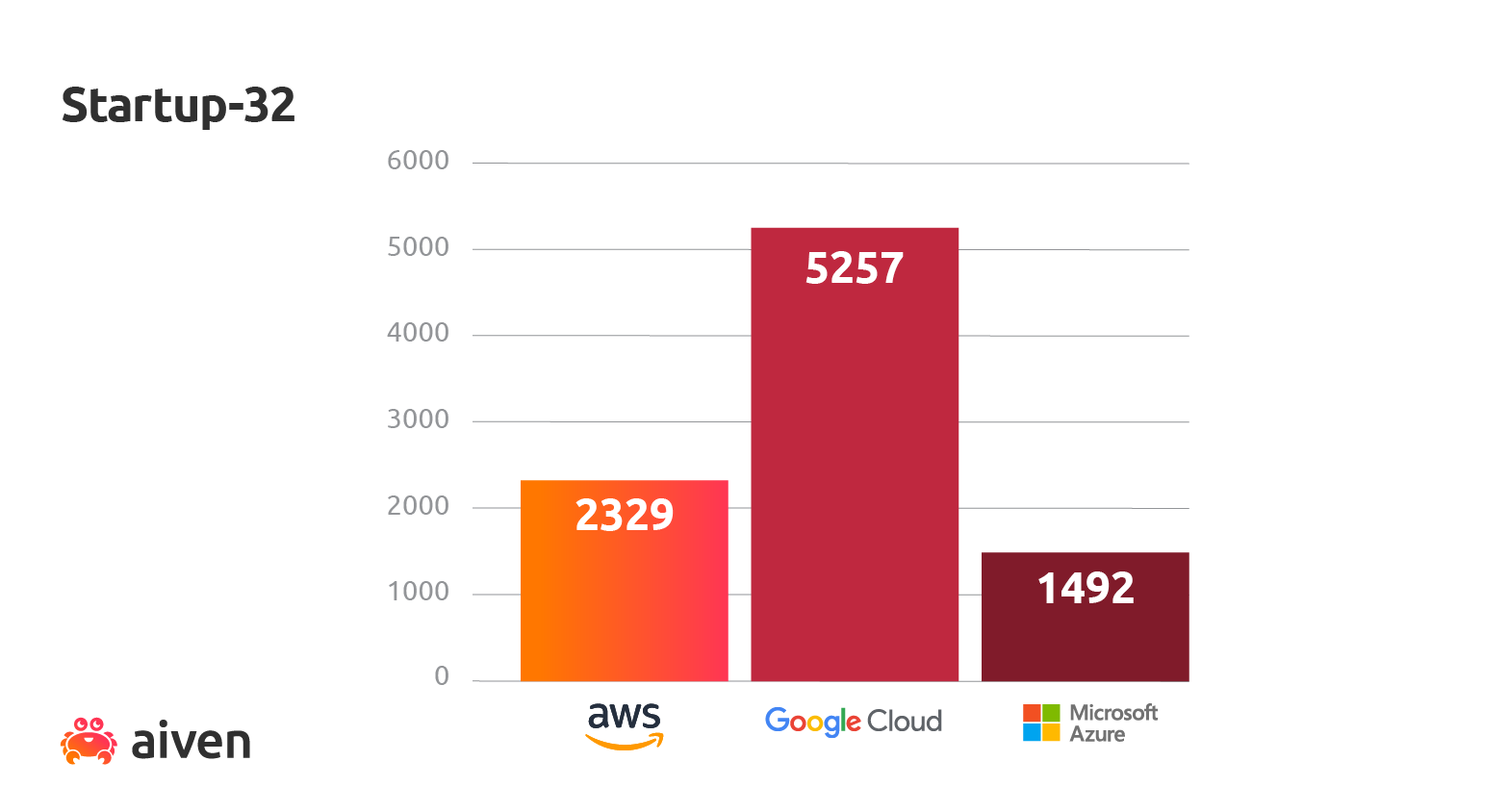

Aiven Startup-32 plans

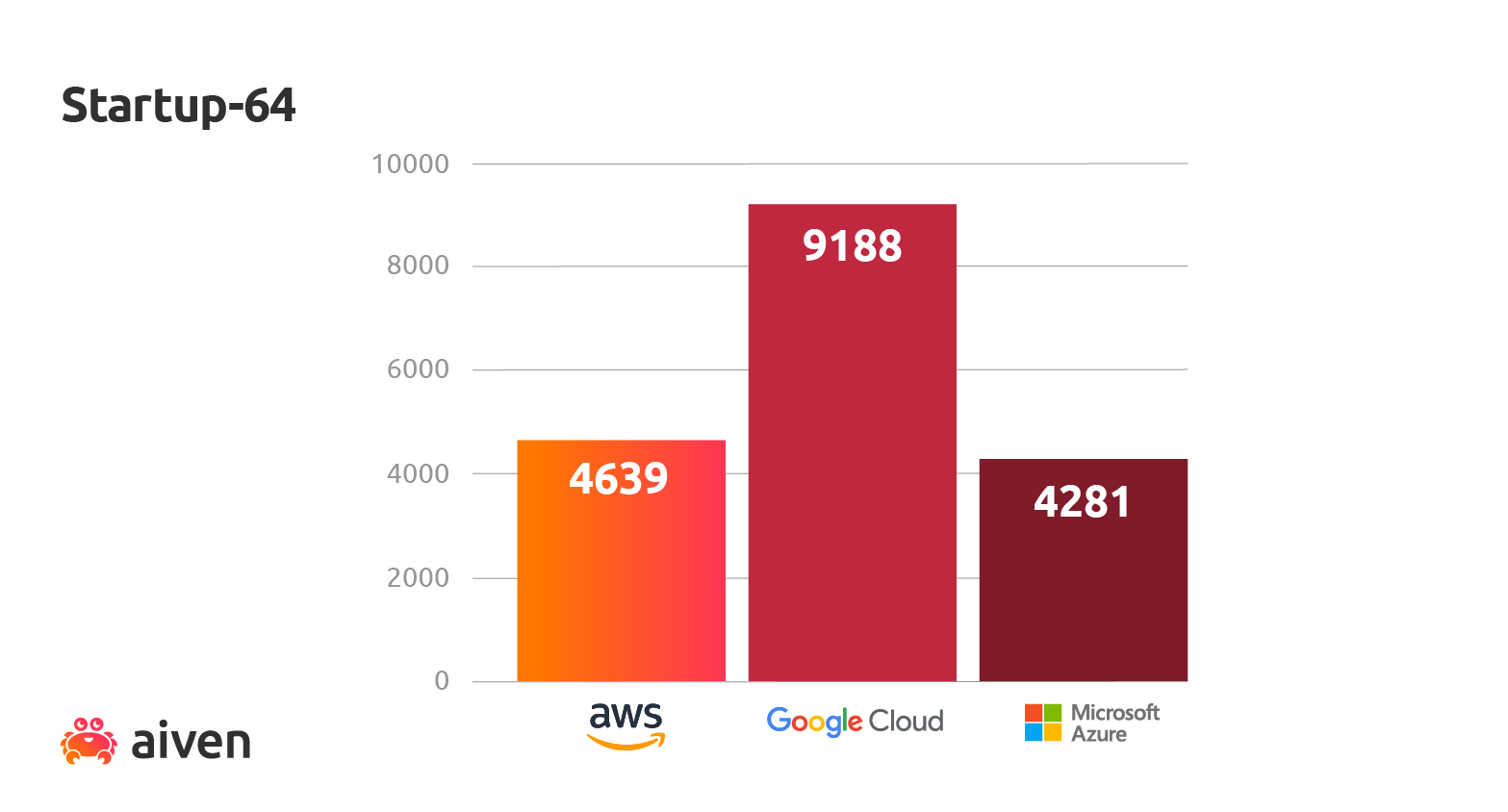

Aiven Startup-64 plans

For the Startup-64 plan on Azure in the germany-westcentral region, we noted abnormally low performance. An identical test in westeurope, where the instance type was standard_l8s_v2 with fewer CPU cores but more RAM, was more in line with expectations.

mTPS/$ average per service plan size

Performance value was calculated as the average number of millions of transactions per second per dollar (mTPS/$) for each cloud provider.
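The exact formula is not spelled out here, so the following Python sketch shows one plausible reading: divide each measured TPS value by one million and by the corresponding price, then average the results. The function names, the price unit and the sample numbers are placeholders for illustration, not benchmark results.

```python
from statistics import mean


def mtps_per_dollar(tps: float, price_usd: float) -> float:
    """Millions of transactions per second per dollar for one measurement."""
    if price_usd <= 0:
        raise ValueError("price must be positive")
    return tps / 1_000_000 / price_usd


def average_mtps_per_dollar(results: list[tuple[float, float]]) -> float:
    """Average mTPS/$ over (tps, price_usd) pairs."""
    return mean(mtps_per_dollar(tps, price) for tps, price in results)


if __name__ == "__main__":
    # Placeholder (tps, price_usd) pairs; NOT measured values.
    sample = [(2_000.0, 100.0), (6_000.0, 400.0)]
    print(f"{average_mtps_per_dollar(sample):.2e} mTPS/$")
```

Averaging the per-measurement ratios, rather than dividing total TPS by total price, weights every measurement equally regardless of plan size; the other choice would be equally defensible under a different reading of the metric.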

We found that, overall, the performance/cost difference between cloud providers substantially favored Google. As before, Startup-4 plans were an exception.

Wrapping up

Many factors affect PostgreSQL performance, including latency differences between regions, which we didn’t differentiate for in this benchmark. Another factor to consider is variably sized workloads. In this benchmark, the basic idea was to generate a significant load on the tested services by using a large number of parallel client connections.

We always recommend that standalone PostgreSQL users identify performance bottlenecks and tune their database configuration, workload or hardware configuration to optimize performance. In our managed PostgreSQL services, however, PostgreSQL system parameters come preconfigured for typical workloads, and you can try out different hardware configurations by choosing different Aiven for PostgreSQL plans.

We also recommend that you run your own benchmarks. We will soon publish our Terraform benchmarking scripts, which make it easy to repeat similar performance tests.

We’ll be publishing more benchmarks like these soon, so stay tuned!

Next steps

Your next step could be to check out Aiven for PostgreSQL.

If you're not using Aiven services yet, go ahead and sign up now for your free trial at https://console.aiven.io/signup!

In the meantime, make sure you follow our changelog and blog RSS feeds or our LinkedIn and Twitter accounts to stay up-to-date with product and feature-related news.