Testing is a necessary pillar of any software development lifecycle. At the same time, it is a fundamentally incomplete process, a cat-and-mouse game: you can't test what you can't imagine, and distributed systems have a nasty habit of producing failures that no one on the team imagined.

Even if your team is imaginative and diligent enough to test a wide range of scenarios, there remains a great deal of nondeterminism stemming from IO, execution scheduling, and other sources of randomness. The network may delay, lose, or reorder packets; the operating system may schedule threads arbitrarily; disks may fail. Bugs that stem from such nondeterminism are called "heisenbugs". They are very difficult to uncover, even in a test scenario that is run many times. When one of these bugs hits a production system, understanding it from telemetry alone is practically impossible.

The business criticality of some systems justifies going the extra mile to find such bugs earlier, during the testing phase, by designing the system and writing the code so that

- the system under test executes less likely code paths on less likely corner case data;

- once a bug is discovered, it can be relatively easily reproduced.

This technique is called deterministic simulation testing.

Deterministic Simulation Testing 101

FoundationDB was one of the pioneers of this approach more than a decade ago. In his seminal talk Testing Distributed Systems w/ Deterministic Simulation, Will Wilson, one of the FoundationDB developers and now a co-founder of Antithesis, explained how deterministic simulation testing was applied to their database. FoundationDB's custom I/O code and test framework made it possible to deterministically simulate a wide variety of disk, network, and machine failures. "Deterministically" is the key word: once a bug is discovered, it must be reproduced in a development environment before it can be fixed. Since then, many other systems, such as Dropbox and TigerBeetle, have employed similar techniques.

To benefit fully from deterministic simulation testing, one has to purposefully design important parts of the system's code for it, which makes retrofitting such tests into an existing code base difficult. Then came Antithesis, which doesn't require developers to modify their code. Instead, Antithesis uses a deterministic supervisor to run user-provided Docker containers. Because it operates at the OS level, it does not care what language your code is written in, whether it is native or runs in a managed runtime, or even whether it is your own code (for example, when the test runs other executables).

Because Antithesis owns the supervisor, it can simulate everything inside it, including system clock ticks. This lets it simulate a large amount of logical application time in significantly less wall-clock time. For example, when WarpStream used Antithesis to find bugs in their system, they simulated 280 logical hours in just 6 real-world hours. In our own testing at Aiven, we have run upwards of 200 wall-clock hours of tests, which amounted to roughly 9,700 logical hours (1.1 years) of application time.
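To make the gap between logical and wall-clock time concrete, here is a minimal sketch of a simulated clock, assuming a simple discrete-event model. It is not how Antithesis works internally (its supervisor operates at the OS level); the `SimClock` class is hypothetical. Instead of actually sleeping, it keeps a queue of timers and jumps straight to the next deadline, so an hour of simulated timeouts costs almost no real time.

```python
import heapq
import itertools
import time


class SimClock:
    """Hypothetical virtual clock: time only advances when the simulation says so."""

    def __init__(self) -> None:
        self.now = 0.0  # logical seconds since the simulation started
        self._timers: list[tuple[float, int, str]] = []
        self._seq = itertools.count()  # tie-breaker keeps ordering deterministic

    def schedule(self, delay: float, event: str) -> None:
        heapq.heappush(self._timers, (self.now + delay, next(self._seq), event))

    def run(self) -> list[tuple[float, str]]:
        """Fire all timers in deadline order, jumping the clock instead of sleeping."""
        fired = []
        while self._timers:
            deadline, _, event = heapq.heappop(self._timers)
            self.now = deadline  # no real sleep: just move the logical clock forward
            fired.append((self.now, event))
        return fired


clock = SimClock()
clock.schedule(30 * 60, "session timeout")
clock.schedule(60 * 60, "log retention check")
clock.schedule(5, "heartbeat")

start = time.perf_counter()
events = clock.run()
elapsed = time.perf_counter() - start

print(events)
print(f"logical: {clock.now / 3600:.1f} h, wall clock: {elapsed:.6f} s")
```

The same ratio describes the figures above: 280 logical hours in 6 real hours is roughly a 47x speedup, and about 9,700 logical hours in about 200 wall-clock hours is roughly 48.5x.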

Compressing so much logical time into a short run puts a great deal of stress on the system. Each test run naturally produces a large number of bifurcation points: did the thread switch or not, was the packet lost or delivered, how fast did things happen, and so on. Taken together, all these choices form a very complex decision tree.

Antithesis autonomously discovers these points and then tries to traverse this tree using proprietary heuristics. In theory, this lets it deliberately exercise less-traversed code paths and uncover tricky bugs that are unlikely to surface in regular happy-path testing.
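The link between the decision tree and reproducibility can be illustrated with a toy model. In the hypothetical sketch below (not Antithesis's actual mechanism), every nondeterministic choice, here which of two toy "threads" runs next, is drawn from a single seeded random number generator. Different seeds walk different branches of the tree, and re-running with the same seed replays exactly the same path, which is what makes a discovered bug reproducible.

```python
import random


def run_simulation(seed: int) -> tuple[int, list[str]]:
    """Run two toy 'threads' that each increment a shared counter non-atomically.

    Every scheduling decision comes from one seeded RNG, so the whole execution
    (one path through the decision tree) is a pure function of the seed.
    """
    rng = random.Random(seed)
    counter = 0
    local: dict[str, int] = {}
    # Each thread does: read the counter, then write back (read value + 1).
    pending = {"A": ["read", "write"], "B": ["read", "write"]}
    trace: list[str] = []

    while pending["A"] or pending["B"]:
        runnable = [t for t in ("A", "B") if pending[t]]
        thread = rng.choice(runnable)  # bifurcation point: which thread runs next?
        step = pending[thread].pop(0)
        if step == "read":
            local[thread] = counter
        else:
            counter = local[thread] + 1
        trace.append(f"{thread}:{step}")
    return counter, trace


# Explore different branches of the decision tree by varying the seed.
outcomes = {seed: run_simulation(seed)[0] for seed in range(100)}
buggy_seeds = [s for s, c in outcomes.items() if c != 2]
print(f"{len(buggy_seeds)} of 100 seeds hit the lost-update bug")

# Replay: re-running a failing seed reproduces exactly the same interleaving.
if buggy_seeds:
    seed = buggy_seeds[0]
    assert run_simulation(seed) == run_simulation(seed)
    print("replaying seed", seed, "->", run_simulation(seed)[1])
```

This toy makes uniformly random choices; as described above, Antithesis instead guides its traversal with proprietary heuristics.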

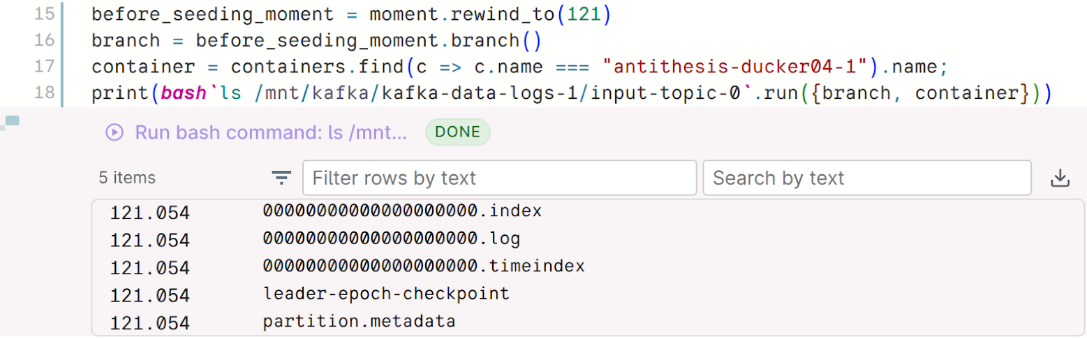

When a bug is found, the deterministic nature of the test lets you replay it and pause at that exact moment. Antithesis's time-travel debugger then lets you explore the system around that point in time, including running GDB, taking core and heap dumps, capturing network packets, and generally running arbitrary Bash commands.

Testing Diskless Kafka

While developing Inkless, a temporary Apache Kafka fork implementing KIP-1150: Diskless Topics, we have to pay special attention to correctness. We are adding many new code paths to the original Kafka code and need to be sure they work correctly on their own. More importantly, we need to ensure they don't break existing Kafka code paths. Apache Kafka already follows a rigorous testing regime, yet we felt that deterministic simulation testing would be an important addition to the test suite, and we chose Antithesis for the job.

Antithesis works with Docker images. At a high level, you push Docker images to a registry that Antithesis can access, then signal Antithesis to run tests on those images with a regular HTTP POST request.
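As a rough illustration of that second step, the sketch below builds (but does not send) an authenticated JSON POST request using only Python's standard library. The endpoint URL, credentials, and parameter names are hypothetical placeholders, not Antithesis's documented API; the real webhook address and parameters come from the Antithesis documentation.

```python
import base64
import json
import urllib.request


def build_launch_request(
    url: str, user: str, password: str, params: dict[str, str]
) -> urllib.request.Request:
    """Build a POST request for a hypothetical test-launch endpoint (not sent here)."""
    body = json.dumps(params).encode("utf-8")
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",  # HTTP basic auth
        },
    )


# All values below are placeholders for illustration only.
request = build_launch_request(
    url="https://example.invalid/launch/inkless-test",
    user="ci-user",
    password="secret",
    params={
        "images": "registry.example.invalid/inkless-driver:latest",
        "duration_minutes": "360",
    },
)
print(request.get_method(), request.full_url)
# Passing the request to urllib.request.urlopen() would actually send it.
```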

We extended the existing Apache Kafka Ducktape system test suite to serve our goal. Without going into too much detail, we configured:

- a Docker Compose file containing:

  - 14 Ducktape workers with Inkless code, running both brokers and clients;

  - a PostgreSQL database (for the Inkless control plane);

  - MinIO storage;

- a Ducktape system test that creates Inkless topics, produces to them, and asserts that all messages get consumed (inkless_produce_consume_test.py, conceptually similar to Kafka’s end-to-end system test). It imports the Antithesis SDK for additional assertions;

- a driver image to run the system test's Ducktape orchestration code;

- a VM image to run the brokers and producer/consumer clients;

- the regular Postgres 17 image to run the Diskless coordinator;

- a config image to carry the Docker Compose file.

The test spins up an Inkless Kafka cluster and continuously produces to and consumes from Diskless Topics, all while asserting some basic invariants about the system:

- that the test never hangs (Antithesis’ reachable operator)

- that every message written is read (Antithesis’ always_or_unreachable operator)

- that bad things don’t happen: messages aren’t read out of order, duplicate offsets are not found, and different partition sets are not found (Antithesis’ unreachable operator)
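The ordering, duplicate-offset, and completeness invariants above can be expressed as plain checks over what was produced and consumed. The sketch below is a simplified, hypothetical version in pure Python: the `Record` type and `check_invariants` function are illustrative and not taken from inkless_produce_consume_test.py. In a real Antithesis test, each violation would presumably be reported through the SDK's assertion operators rather than collected into a list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    partition: int
    offset: int
    value: str


def check_invariants(produced: set[str], consumed: list[Record]) -> list[str]:
    """Return human-readable invariant violations (an empty list means all good)."""
    violations: list[str] = []
    last_offset: dict[int, int] = {}
    seen: set[tuple[int, int]] = set()

    for rec in consumed:
        # Duplicate offsets within a partition should be unreachable.
        key = (rec.partition, rec.offset)
        if key in seen:
            violations.append(
                f"duplicate offset {rec.offset} in partition {rec.partition}"
            )
        seen.add(key)

        # Offsets within a partition must never go backwards.
        prev = last_offset.get(rec.partition)
        if prev is not None and rec.offset < prev:
            violations.append(
                f"out-of-order read in partition {rec.partition}: "
                f"{rec.offset} after {prev}"
            )
        last_offset[rec.partition] = rec.offset

    # Every message written must eventually be read.
    missing = produced - {rec.value for rec in consumed}
    if missing:
        violations.append(f"{len(missing)} produced message(s) never consumed")
    return violations


ok = [Record(0, 0, "a"), Record(0, 1, "b"), Record(1, 0, "c")]
print(check_invariants({"a", "b", "c"}, ok))  # []

bad = [Record(0, 1, "b"), Record(0, 0, "a"), Record(0, 0, "a")]
print(check_invariants({"a", "b", "c"}, bad))
```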

After some fiddling with Python and Docker to get everything aligned, we pushed the images and sent an HTTP POST request to Antithesis to run our tests!


The test continuously produced and consumed from a Kafka cluster for a total of ~2200 logical hours in the Antithesis supervisor and found… no issues!

We were relieved, to say the least. Still, as any engineer knows, a passing test can be the most suspicious kind of test: it may simply mean that you haven't found the problem yet.

To double-check our assumptions, we ran some of Apache Kafka’s original system tests against upstream Apache Kafka code (not our minimal Inkless fork) under Antithesis. Surprisingly, this led us to discover KAFKA-19880, a bug where, even with the idempotent producer, the first record batch produced may be delivered out of order relative to the rest. One would expect this not to happen when idempotence is enabled. Whether this is a documentation gap or a genuine bug remains to be seen; we are awaiting a response from the community. It concerns us because, while Inkless was not vulnerable to this due to specifics of its current implementation, we are not sure that KIP-1150 won't be.

In the end, Antithesis didn’t uncover anything for us in Inkless. It is worth noting that we had already run a stripped-down version of deterministic simulation testing before this, which did help: Inkless is tested with a property-based writer test that randomizes inputs such as producer request count, message arrival time, and message payload shape, and then asserts that invariants about the system were upheld. This type of test required us to write (and maintain) a fair amount of code, which made it awkward in places.
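For comparison, the general shape of such a property-based test can be sketched as follows. Everything here is hypothetical: `ToyWriter` is a stand-in that batches messages in arrival order, not the Inkless writer, and the two properties checked (every message written exactly once in arrival order, and no batch over the size limit unless it holds a single oversized message) are only example invariants. All randomized inputs derive from a seed, so a failing seed can be replayed.

```python
import random


class ToyWriter:
    """Hypothetical stand-in for a writer that groups incoming messages into batches."""

    def __init__(self, max_batch_bytes: int) -> None:
        self.max_batch_bytes = max_batch_bytes
        self.batches: list[list[bytes]] = []
        self._current: list[bytes] = []
        self._current_size = 0

    def append(self, payload: bytes) -> None:
        # Close the current batch if this payload would push it over the limit.
        if self._current and self._current_size + len(payload) > self.max_batch_bytes:
            self.flush()
        self._current.append(payload)
        self._current_size += len(payload)

    def flush(self) -> None:
        if self._current:
            self.batches.append(self._current)
            self._current, self._current_size = [], 0


def run_property(seed: int) -> None:
    """One randomized run; every input is derived from the seed, so failures replay."""
    rng = random.Random(seed)
    writer = ToyWriter(max_batch_bytes=rng.randint(16, 256))

    # Randomize the request count, arrival times, and payload sizes.
    requests = []
    for i in range(rng.randint(1, 50)):
        arrival = rng.uniform(0.0, 10.0)
        payload = f"{i}:".encode() + bytes(rng.randint(0, 64))
        requests.append((arrival, payload))
    requests.sort(key=lambda r: r[0])  # feed the writer in arrival order

    for _, payload in requests:
        writer.append(payload)
    writer.flush()

    # Invariant 1: every message is written exactly once, in arrival order.
    written = [p for batch in writer.batches for p in batch]
    assert written == [p for _, p in requests], f"seed {seed}: order or content differs"
    # Invariant 2: only a single oversized message may exceed the batch limit.
    for batch in writer.batches:
        size = sum(len(p) for p in batch)
        assert len(batch) == 1 or size <= writer.max_batch_bytes, f"seed {seed}: batch too big"


for seed in range(1000):
    run_property(seed)
print("1000 randomized runs passed")
```

Even this toy needs its own input generators, ordering logic, and invariant code, which hints at why maintaining such a harness by hand becomes awkward.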

One of the many benefits of Antithesis is that you can test any piece of software with it, without changing anything.

Conclusion

The Apache Kafka project is a mature, battle-tested piece of software whose community has always been diligent about testing and design. That diligence shows in its passing even the harshest state-of-the-art chaos tests, which throw every nondeterministic behavior in the book at the system.

We are happy to say that the tests so far suggest that our Inkless fork, which implements the yet-to-be-accepted Diskless Topics feature, is comparably reliable.

Of course, a passing test suite is never the end of the story. Testing is an ongoing effort that every team must continuously invest in. On that note, stay tuned for our next post, in which we stress test Inkless with benchmarks!
