Sep 6, 2018

The current data and event ecosystem

Read this post to find out about some of the most popular components you can use to build your modern event-driven data pipeline.

John Hammink

Developer Advocate at Aiven

Nowadays, everyone is talking about building data platforms (or data pipelines) to answer specific business questions. While information and intelligence have always been critical to business, the sheer volume, velocity, and complexity of such data has exploded.

Everything from applications, machinery, and infrastructure to clothing, smartphones, and even automotive electronics collects information. With more than 13 billion devices and systems connected in 2018, a number projected to grow to 70 billion by 2020, understanding this ecosystem is essential to staying competitive.

In many cases, the data (combined with the platform) is the product. In this post, we'll get a grasp on today's data and event ecosystem by looking at some of the tools that others are using for each component of the data pipeline.

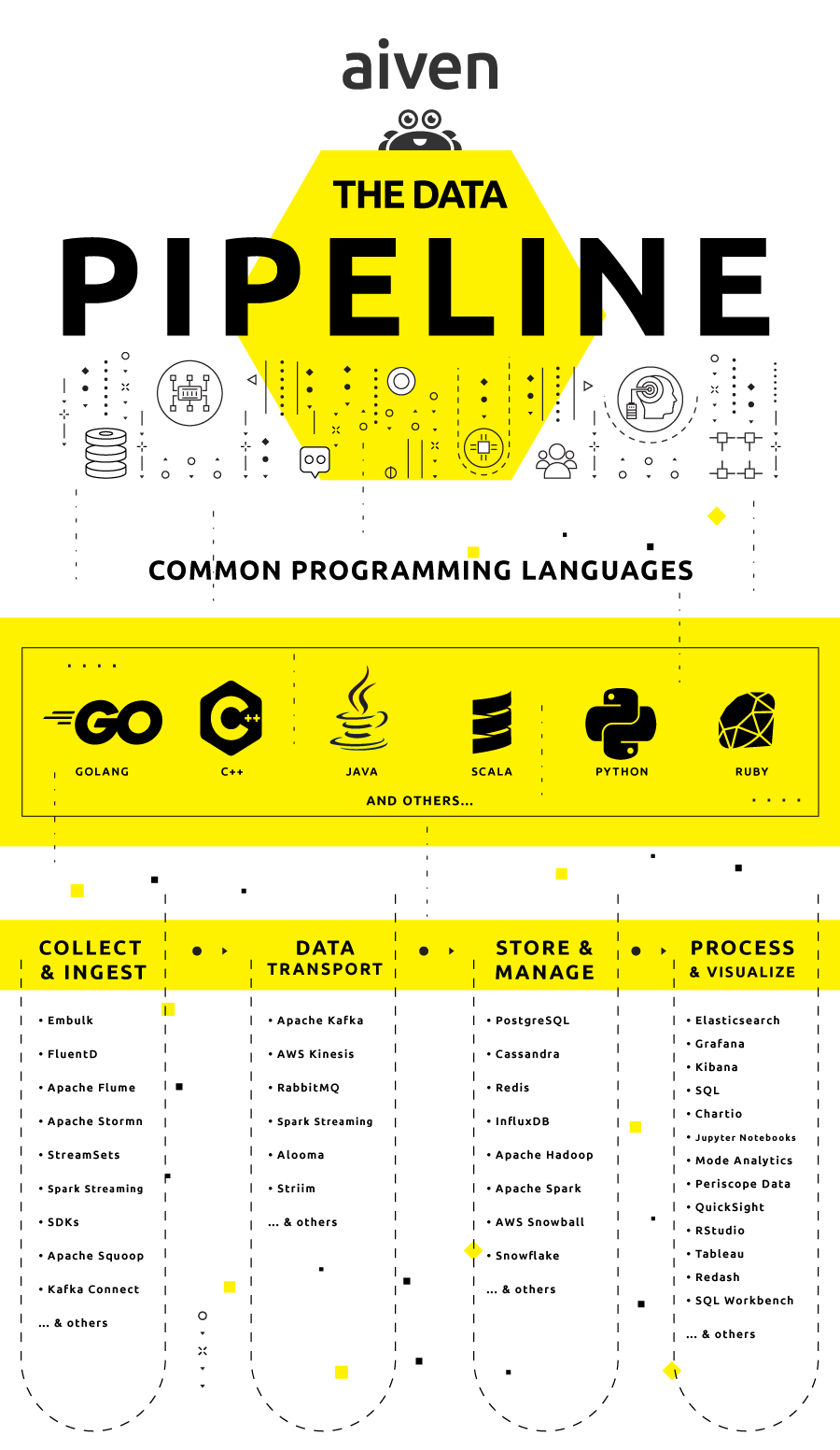

We've broken the data pipeline down into four sections: Ingestion, Transport, Storage and Management, as well as Processing and Visualizing. Before we start, let's take a bird's-eye view of the pipeline.

The data ingestion ecosystem

At the beginning of any data pipeline, data ingestion involves procuring events from sources (applications, IoT devices, web and server logs, and even data file uploads) and transporting them into a data store for further processing.

Data ingestion can be continuous, asynchronous, batched, in real time, or some combination thereof. There are many data ingestion technologies that can take raw data from disparate sources and upload them to a single source of truth.
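To make the batched mode concrete, here is a minimal Python sketch that groups a continuous stream of events into fixed-size batches before upload. The `batched` helper and the sample events are hypothetical and are not taken from any of the tools described in this post.

```python
from itertools import islice
from typing import Iterable, Iterator, List


def batched(events: Iterable[dict], size: int) -> Iterator[List[dict]]:
    """Yield lists of up to `size` events from a (possibly endless) stream."""
    if size < 1:
        raise ValueError("size must be at least 1")
    it = iter(events)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return  # the stream is exhausted
        yield batch


# Hypothetical events, standing in for log lines or sensor readings.
stream = ({"id": i} for i in range(5))
batches = list(batched(stream, 2))
```

Five events with a batch size of two produce two full batches and one partial batch; a real ingester would usually also flush a partial batch after a timeout.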

Embulk

Embulk is a parallel bulk data uploader built around a core and a series of community-contributed input and output plugins that supports bulk data transfer between various data stores, databases, NoSQL stores, and cloud services.

Embulk supports a number of now-standard features of data ingestion, such as guessing input file formats, parallel & distributed execution, all-or-nothing transaction control, and resuming after an upload stalls.
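The idea behind all-or-nothing transaction control can be sketched with Python's built-in `sqlite3` module. This illustrates the general technique only, not how Embulk implements it; the `events` table and the `load_all_or_nothing` helper are assumptions made for the example.

```python
import sqlite3


def load_all_or_nothing(conn: sqlite3.Connection, rows) -> bool:
    """Insert every row in one transaction; roll back all of them on any error."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.executemany("INSERT INTO events (id, payload) VALUES (?, ?)", rows)
        return True
    except sqlite3.Error:
        return False


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, payload TEXT NOT NULL)")
conn.commit()

ok = load_all_or_nothing(conn, [(1, "a"), (2, "b")])
# The second batch repeats primary key 1, so none of its rows are kept.
failed = load_all_or_nothing(conn, [(3, "c"), (1, "dup")])
count = conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]
```

After both calls the table still holds only the two rows from the first batch, so a failed upload leaves no partial data behind.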

StreamSets

With over 2 million downloads, StreamSets Data Collector is a popular, "low-latency ingest infrastructure tool that lets you create continuous data ingest pipelines with a drag and drop UI." Licensed under Apache 2.0 open-source, StreamSets is a good way to set up data ingestion graphically, with minimal code and configuration.

Fluentd

Fluentd, a "Unified Logging Layer", is an open-source streaming data collector that decouples data sources from backend systems. A favorite of Yukihiro Matsumoto, creator of Ruby, Fluentd resembles Embulk in that it consists of a community-maintained core combined with input and output plug-ins. Fluent Bit is the version maintained for embedded systems.

Apache Sqoop, Flume, and Spark

Apache Sqoop is a tool for transporting bulk data between Apache Hadoop and structured datastores like relational databases. By offloading certain tasks (such as extract, transform, load operations) onto Hadoop, it can make data warehouses more efficient.

Apache Flume is "a distributed, reliable and available service for efficiently collecting, aggregating, and moving large amounts of log data."

Based on streaming data flows, and geared toward Hadoop, Flume acts as a buffer between data producers and consumers — centralized data stores — when incoming data velocity exceeds the write capacity of the stores. Flume is distributed, scalable, and fault-tolerant.
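The buffering role described above can be sketched with a bounded queue from Python's standard library. This is a toy model rather than Flume's architecture or API: the `stored` list stands in for a central data store, and the buffer size and sleep interval are arbitrary assumptions.

```python
import queue
import threading
import time

buffer = queue.Queue(maxsize=100)  # bounded in-memory channel
SENTINEL = None                    # marks the end of the stream
stored = []                        # stands in for the slower central data store


def producer(n: int) -> None:
    for i in range(n):
        buffer.put(f"log line {i}")  # blocks only if the buffer is full
    buffer.put(SENTINEL)


def consumer() -> None:
    while True:
        item = buffer.get()
        if item is SENTINEL:
            break
        time.sleep(0.001)  # simulate a store that writes slower than events arrive
        stored.append(item)


threads = [
    threading.Thread(target=producer, args=(50,)),
    threading.Thread(target=consumer),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

The producer finishes almost immediately because the queue absorbs the burst, while the consumer drains it at its own pace and preserves the order of the events.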

As a component of Apache Spark, Spark Streaming combines streaming with batch and interactive queries. Spark Streaming can read data from HDFS, Kafka, Twitter, and ZeroMQ, and uses ZooKeeper and HDFS for high-availability ingestion.

Analytics data is collected when event code is fired, and SDKs are generally available for any number of ingestion, storage and management tools in most major programming languages.

The data transport ecosystem

Data transport overlaps somewhat with data ingestion, but "ingestion" revolves around getting data extracted from one system and into another, while "transport" concerns getting data from any location to any other.

Message brokers are a key component in data transport; their raison d'etre is to translate a message from a sender's protocol to that of a receiver, and possibly transform messages prior to moving them.

Apache Kafka is a high-throughput distributed messaging system for consistent, fault-tolerant and durable message collection and delivery. Kafka producers publish streams of records to topics, to which consumers subscribe. These streams of records are stored and processed as they occur.

Kafka is typically used for a few broad classes of applications:

- Real-time streaming data pipelines between systems or applications;

- Real-time streaming applications that transform streams of data;

- Real-time streaming applications that react to streams of data.

Compared to earlier, simpler messaging systems like ZeroMQ or RabbitMQ, Kafka generally has better throughput, integrated partitioning, and fault tolerance, making it excellent for large-scale message handling.
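The publish/subscribe model and the partitioning mentioned above can be illustrated with a small in-memory model. This is a sketch under stated assumptions and not the Kafka client API: `ToyTopic` is a hypothetical class, and CRC32 is used here only as a convenient deterministic hash for picking a partition.

```python
import zlib
from collections import defaultdict


class ToyTopic:
    """An in-memory stand-in for a partitioned topic (not the Kafka API)."""

    def __init__(self, partitions: int) -> None:
        self.logs = [[] for _ in range(partitions)]  # one append-only log per partition
        self.offsets = defaultdict(lambda: [0] * partitions)  # next offset per consumer

    def publish(self, key: str, value: str) -> int:
        # Records with the same key always land in the same partition,
        # which preserves their relative order.
        partition = zlib.crc32(key.encode()) % len(self.logs)
        self.logs[partition].append((key, value))
        return partition

    def poll(self, consumer: str) -> list:
        """Return records this consumer has not seen yet and advance its offsets."""
        fresh = []
        for p, log in enumerate(self.logs):
            start = self.offsets[consumer][p]
            fresh.extend(log[start:])
            self.offsets[consumer][p] = len(log)
        return fresh


topic = ToyTopic(partitions=3)
p1 = topic.publish("sensor-1", "21.5")
p2 = topic.publish("sensor-1", "21.7")
topic.publish("sensor-2", "19.0")

first = topic.poll("dashboard")   # all three records
second = topic.poll("dashboard")  # nothing new since the last poll
audit = topic.poll("audit")       # an independent consumer reads from the start
```

Because records are retained in the log and each consumer tracks its own position, a second consumer can read the same records without affecting the first.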

Kafka's use has expanded to include everything from commit logs, to website activity tracking, to stream processing. (Find out more about Aiven's fully managed Kafka offering).

Part of the Kafka family, Kafka Connect is a good alternative for data ingestion and export tasks. It is a framework with a number of available connectors for interacting with systems and services, ranging from change data capture for popular databases to MQTT and, for example, Twitter.

Amazon's equivalent is Amazon Kinesis, a real-time data processing platform offered on Amazon Web Services. As a fully managed solution, it can handle widely varying amounts of ingest data without you having to worry about scaling; it ingests, buffers, and processes streaming data in real time.

Data storage and data management ecosystem

No one talks about Big Data or its ecosystem without including Apache Hadoop and Apache Spark. Hadoop is a framework that can process large data sets across clusters; Spark is "a unified analytics engine for large scale data processing."

Both are widely adopted, often used together, and have strong community support with open-source and commercial versions available. However, as both are early evolutionary steps in big data, they come with their unique problems.

For example, with Hadoop, aside from the well-known talent gap, users have found that the MapReduce programming paradigm isn't a good match for all problems. These include the typically iterative tasks of a data scientist's exploratory work.

Spark can be much faster than Hadoop (thanks to in-memory processing) and supports SQL queries, which takes the Hadoop/Spark stack comfortably out of the data engineer's domain and into that of analysts, data scientists and even managers. Still, both technologies require infamously complicated configuration chops.

Plus, if you've ever used Hadoop and Spark together, you're probably well aware of the "small files problem": the Hadoop Distributed File System (HDFS) generally works better with a small number of large files than with a large number of small ones.
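A rough back-of-the-envelope calculation shows why small files hurt. The sketch below assumes a 128 MiB block size and a commonly quoted rule of thumb of about 150 bytes of NameNode memory per file or block object; both figures are assumptions, and real clusters will differ.

```python
import math

BLOCK_SIZE = 128 * 1024**2   # assumed HDFS block size (128 MiB)
BYTES_PER_OBJECT = 150       # assumed rough NameNode memory cost per file or block


def namenode_objects(num_files: int, file_size: int) -> int:
    """Count metadata objects: one per file plus one per block the file occupies."""
    blocks_per_file = max(1, math.ceil(file_size / BLOCK_SIZE))
    return num_files * (1 + blocks_per_file)


total_bytes = 1024**4  # 1 TiB of data in both layouts

small = namenode_objects(total_bytes // 1024**2, 1024**2)  # stored as 1 MiB files
large = namenode_objects(total_bytes // 1024**3, 1024**3)  # stored as 1 GiB files

small_mem = small * BYTES_PER_OBJECT
large_mem = large * BYTES_PER_OBJECT
```

Under these assumptions, the same terabyte needs about 2.1 million metadata objects as 1 MiB files but only about 9 thousand as 1 GiB files, which is why compacting small files is a common remedy.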

Nonetheless, pipelines have emerged with other data stores and management methods; some established, some new. Let's look at a few that Aiven supports:

PostgreSQL

PostgreSQL is an open-source object-relational database management system emphasizing extensibility and standards compliance that has been around so long, it's become a standby for companies ranging from manufacturing to IoT. Aiven's fully managed PostgreSQL service can be found here.

Redis

Redis is a superfast variant of the NoSQL database known as a key-value store. As such, it's an extremely simple database that stores only key-value pairs and serves search results by retrieving the value associated with a known key.

Redis's speed and simplicity make it well-suited for embedded databases, session caches, or queues. In fact, it's often used in conjunction with message brokers, or as a message broker itself. The Aiven Redis service can be found here.
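The key-value model and the session-cache use case can be sketched in a few lines of Python. This is a toy, dictionary-backed store rather than Redis or its client library; `ToyKeyValueStore`, its `ttl` parameter, and the injected fake clock are all hypothetical.

```python
import time
from typing import Optional


class ToyKeyValueStore:
    """A dictionary-backed key-value store with optional expiry (not Redis itself)."""

    def __init__(self, clock=time.monotonic) -> None:
        self._data = {}      # key -> (value, expires_at or None)
        self._clock = clock  # injectable so the example can control time

    def set(self, key: str, value: str, ttl: Optional[float] = None) -> None:
        expires_at = self._clock() + ttl if ttl is not None else None
        self._data[key] = (value, expires_at)

    def get(self, key: str) -> Optional[str]:
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if expires_at is not None and self._clock() >= expires_at:
            del self._data[key]  # lazily evict expired entries on read
            return None
        return value


now = [0.0]  # a fake clock so the example is deterministic
store = ToyKeyValueStore(clock=lambda: now[0])
store.set("session:42", "alice", ttl=30)
before = store.get("session:42")  # still valid
now[0] = 31.0
after = store.get("session:42")   # expired, so the lookup misses
```

Lookups are by exact key only, which is what keeps this kind of store simple and fast.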

Cassandra

If you're working with large, active data sets, and need to tweak the tradeoff between consistency, availability and partition tolerance, then Apache Cassandra may be your solution. Because data is distributed across nodes, when one node — or even an entire data center — goes down, the data remains preserved in other nodes (depending on the consistency level setting).
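The consistency tradeoff can be expressed as simple arithmetic on replica counts. The sketch below uses the standard quorum rule (a read is guaranteed to overlap the latest write when read replicas plus write replicas exceed the replication factor); the function names are hypothetical and nothing here calls Cassandra itself.

```python
def quorum(replication_factor: int) -> int:
    """Majority of replicas: floor(RF / 2) + 1."""
    return replication_factor // 2 + 1


def reads_see_latest_write(rf: int, write_replicas: int, read_replicas: int) -> bool:
    """True when every read set must overlap every write set (R + W > RF)."""
    return read_replicas + write_replicas > rf


rf = 3
q = quorum(rf)
strong = reads_see_latest_write(rf, q, q)  # quorum writes and quorum reads
weak = reads_see_latest_write(rf, 1, 1)    # single-replica writes and reads
tolerated_failures = rf - q                # replicas that can be down at quorum
```

With a replication factor of three, quorum reads and writes stay consistent while tolerating one failed replica, whereas single-replica operations favor availability and latency over consistency.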

As a wide column store, Cassandra is schema-agnostic and stores data in column families resulting in a multi-dimensional key-value store. Technically schema-free and "NoSQL", Cassandra uses a SQL variant called CQL for data definition and manipulation, making administration easy for RDBMS experts. Aiven Cassandra is managed, freeing users from on-prem concerns such as cluster management and scaling.

Data visualization

When you want to develop insights and reach conclusions to support your hypotheses, you're in the domain of data scientists. Data visualization tools and dashboards also support managers, marketers, and even end consumers, but there are simply too many such tools, with too many areas of specialty, to possibly cover in this article.

When time-series data needs to be plotted to a graph and visualized (to monitor system performance, say, or to see how a particular variable or group of variables has performed over time), a solution like Grafana might be just the ticket. Although originally built for performance and system monitoring, it now directly supports more than 40 data sources and 16 apps.

Other tools

Often the need to handle, search and process raw text arises. Based on Lucene, Elasticsearch is a distributed document and full-text indexing solution that supports complex data analytics in real time. Aiven's enhanced Elasticsearch offering is frequently used alongside other Aiven services such as Aiven Kafka, PostgreSQL, and Redis.
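Full-text indexing rests on an inverted index, which maps each term to the documents that contain it. The following is a minimal sketch of that data structure in plain Python, not Elasticsearch's or Lucene's implementation; the sample documents and the `build_index` and `search` helpers are made up for illustration.

```python
import re
from collections import defaultdict


def build_index(docs: dict) -> dict:
    """Map each lowercase term to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in re.findall(r"[a-z0-9]+", text.lower()):
            index[term].add(doc_id)
    return index


def search(index: dict, query: str) -> set:
    """Return ids of documents containing every term in the query (AND semantics)."""
    terms = re.findall(r"[a-z0-9]+", query.lower())
    if not terms:
        return set()
    result = set(index.get(terms[0], set()))
    for term in terms[1:]:
        result &= index.get(term, set())
    return result


docs = {
    1: "Kafka moves events between services",
    2: "Redis caches session data",
    3: "Kafka Connect exports events to Elasticsearch",
}
index = build_index(docs)
hits = search(index, "kafka events")
```

A query is answered by intersecting the sets for its terms instead of scanning every document, which is what makes searching large text collections fast.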

Where to learn more

Here are a few resources where you can learn more about data pipelines and related technologies:

General

On Data Ingestion

On Message Brokers and Data Transport

On Data Storage and Management

What is a key-value store?

What is a time-series database?

On Data Visualization

Wrapping up

As you can see, the data and event ecosystem provides a vast number of components to create a data pipeline from. To get a feel for real use cases, you can find examples of companies who have built their data pipelines using the Aiven platform here.

With Aiven you too can build your own data pipelines with just a few clicks! We have the infrastructure and expertise to help you get started.

If you enjoyed this post, then stay tuned for our coverage on the 7 challenges that a data pipeline must solve. In the meantime, try our platform by signing up for a no-commitment, free trial here. You can also subscribe to our blog and changelog RSS feeds, or follow us on Twitter or LinkedIn to stay up-to-date.
